AI security and governance: from principles to practice

Secure and govern all AI from code to production. Eliminate blind spots across AI agents, models, data flows, and third-party tools with continuous monitoring, posture management, and compliance automation.

Featured

Automating AI documentation and moving beyond manual questionnaires

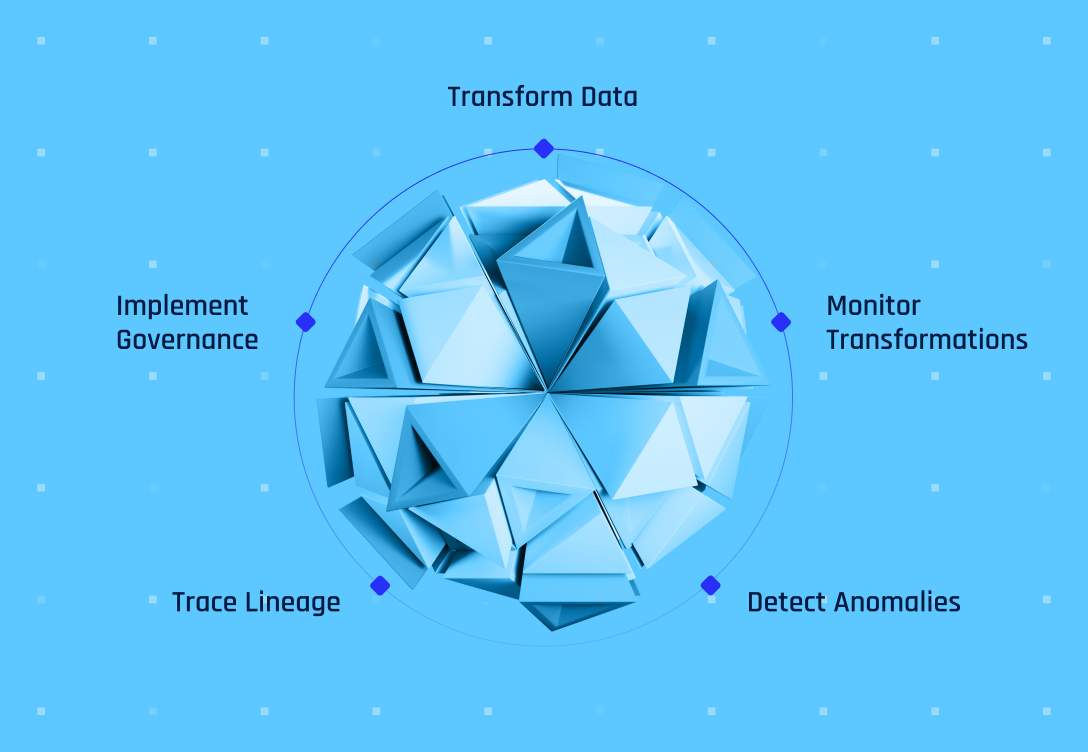

The hidden risk of transformed data in AI models

Explore related resources

Effective AI governance begins with data flow monitoring

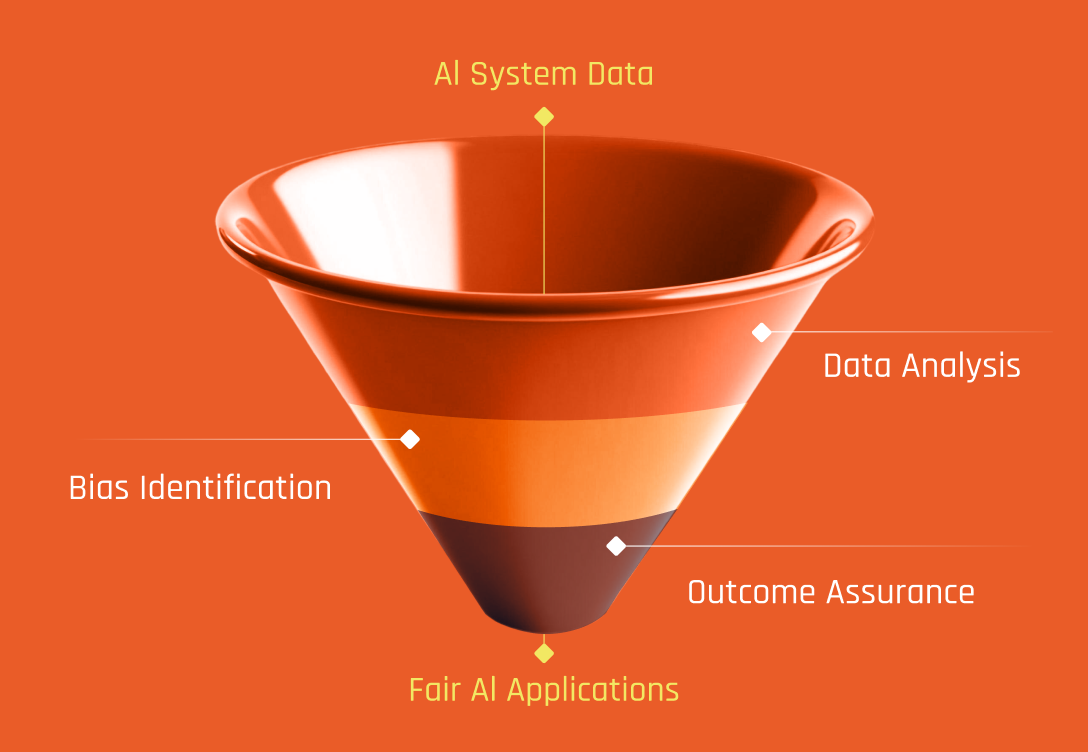

Automated AI bias detection without manual assessments

How proactive AI incident response protects your future

Watch: Track fast-moving sensitive data

AI Governance FAQ

What is AI security and how is it different from AI governance?

AI security focuses on protecting AI systems, models, data, and agents from threats including data poisoning, prompt injection, model theft, unauthorized access, and supply chain compromise. AI governance is the framework of policies, oversight, and compliance controls that ensures AI is deployed responsibly. Security is how you defend AI systems. Governance is how you manage them. In practice, they are deeply connected: you cannot govern what you cannot secure, and security controls need governance frameworks to be applied consistently. An effective program addresses both.

What are the biggest AI security threats in 2026?

The most significant AI security threats facing enterprises include data poisoning (corrupting training data to manipulate AI outputs), agentic AI risk (autonomous agents with overprivileged access acting as insider threats), AI supply chain compromise (vulnerabilities in third-party models, MCP servers, and open-source components), prompt injection (manipulating AI agents into executing unauthorized actions), and identity exploitation (AI-generated deepfakes and credential abuse). The shift from generative AI to agentic AI has fundamentally expanded the attack surface because agents don't just generate content; they execute actions with real-world consequences.

What is AI security posture management (AI-SPM)?

AI security posture management is the practice of continuously discovering, assessing, and managing the security risks across an organization's AI assets, including models, agents, data pipelines, and third-party AI tools. AI-SPM provides visibility into your AI footprint, identifies misconfigurations and overprivileged access, and monitors for policy violations. It is one component of a comprehensive AI security program. Relyance AI treats AI-SPM as a capability within its broader data defense platform, not a standalone tool, because posture awareness without context, remediation, and enforcement is just a starting point.
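As a rough illustration of the posture checks described above, the sketch below walks a small AI asset inventory and flags overprivileged access and policy violations. It is a minimal sketch under stated assumptions, not a description of how any particular AI-SPM product works: the `AIAsset` record, the scope names, and the `approved` flag are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AIAsset:
    """Hypothetical inventory record for one AI asset."""
    name: str
    kind: str                 # e.g. "model", "agent", "pipeline", "third_party_tool"
    granted_scopes: set[str]  # permissions the asset has been given
    used_scopes: set[str]     # permissions it was actually observed using
    approved: bool = True     # False if found outside the sanctioned inventory


def assess(asset: AIAsset) -> list[str]:
    """Return human-readable findings for a single asset."""
    findings = []
    # Scopes that were granted but never used suggest overprivileged access.
    unused = asset.granted_scopes - asset.used_scopes
    if unused:
        findings.append(f"overprivileged: unused scopes {sorted(unused)}")
    # An asset missing from the approved inventory counts as a policy violation here.
    if not asset.approved:
        findings.append("policy violation: asset not in approved inventory")
    return findings


# Illustrative data only; names and scopes are made up.
inventory = [
    AIAsset("support-agent", "agent",
            {"crm:read", "crm:write", "billing:write"}, {"crm:read"}),
    AIAsset("churn-model", "model", {"warehouse:read"}, {"warehouse:read"}),
    AIAsset("notes-plugin", "third_party_tool",
            {"docs:read"}, {"docs:read"}, approved=False),
]

report = {asset.name: assess(asset) for asset in inventory}
for name, findings in report.items():
    print(name, findings or "no findings")
```

A real program would populate the inventory through continuous discovery rather than a hard-coded list, and would route findings into remediation and enforcement rather than printing them.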

What is AI governance and why do companies need it?

AI governance is a comprehensive framework of policies, processes, and oversight mechanisms that ensures organizations develop and deploy artificial intelligence systems safely, ethically, and in compliance with regulations. It functions like corporate governance but addresses AI-specific challenges including model bias, data security, and algorithmic accountability.

Companies need AI governance in 2025 because regulations like the EU AI Act now carry enforceable deadlines and steep penalties for non-compliance, AI deployment has moved from experimental to mission-critical operations, and stakeholders increasingly demand transparency and responsible AI practices. With over 90% of organizations increasing AI investment but fewer than 15% having mature governance programs, the gap represents significant reputational, financial, and compliance risk. Effective governance provides the guardrails that enable teams to innovate safely while managing bias, drift, security vulnerabilities, and regulatory obligations.

What are the main requirements of the EU AI Act for high-risk AI systems?

The EU AI Act imposes strict obligations on providers of high-risk AI systems across five key areas. Organizations must establish a continuous risk management system throughout the entire AI lifecycle, implement rigorous data governance so that training data meets quality standards and discriminatory outcomes are minimized, and create comprehensive technical documentation before market deployment.

Additionally, high-risk systems must be designed for transparency and effective human oversight, allowing operators to intervene, override, or shut down the system when necessary.

Finally, Article 15 mandates that systems achieve appropriate levels of accuracy, robustness against errors and faults, and cybersecurity protection. For general-purpose AI models, a critical compliance deadline arrived on August 2, 2025, requiring providers to maintain technical documentation, disclose model capabilities and limitations, establish copyright policies, and publish training data summaries.
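One way to keep track of the five high-risk obligation areas described above is a simple evidence checklist. The sketch below is illustrative only: the area keys paraphrase this page's summary rather than the Act's legal text, and the evidence file names are hypothetical.

```python
# The five obligation areas for high-risk systems, as summarized on this page.
HIGH_RISK_AREAS = [
    "risk_management",
    "data_governance",
    "technical_documentation",
    "transparency_and_human_oversight",
    "accuracy_robustness_cybersecurity",
]


def missing_areas(evidence: dict[str, list[str]]) -> list[str]:
    """Return the areas that have no supporting evidence recorded."""
    return [area for area in HIGH_RISK_AREAS if not evidence.get(area)]


# Hypothetical evidence register for one AI system.
evidence = {
    "risk_management": ["risk-register.xlsx"],
    "data_governance": ["training-data-quality-report.pdf"],
    "technical_documentation": [],
    "transparency_and_human_oversight": ["override-procedure.md"],
}

gaps = missing_areas(evidence)
print(gaps)
```

An empty list or a missing key both count as a gap, so an area cannot be marked complete without at least one recorded artifact. Whether a given artifact actually satisfies the obligation remains a legal and compliance judgment.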

How do you measure and reduce AI risk in production models?

